Autonomous Robotic Manipulation

Modeling Top-Down Saliency for Visual Object Search

Interactive Perception

State Estimation and Sensor Fusion for the Control of Legged Robots

Probabilistic Object and Manipulator Tracking

Global Object Shape Reconstruction by Fusing Visual and Tactile Data

Robot Arm Pose Estimation as a Learning Problem

Learning to Grasp from Big Data

Gaussian Filtering as Variational Inference

Template-Based Learning of Model Free Grasping

Associative Skill Memories

Real-Time Perception meets Reactive Motion Generation

Autonomous Robotic Manipulation

Learning Coupling Terms of Movement Primitives

State Estimation and Sensor Fusion for the Control of Legged Robots

Inverse Optimal Control

Motion Optimization

Optimal Control for Legged Robots

Movement Representation for Reactive Behavior

Associative Skill Memories

Real-Time Perception meets Reactive Motion Generation

Motion Blur in Layers

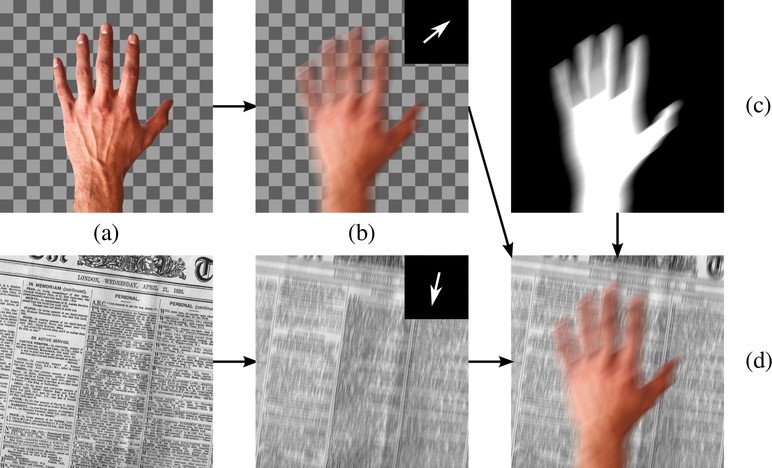

Videos contain complex spatially-varying motion blur due to the combination of object motion, camera motion, and depth variation with finite shutter speeds. Existing methods to estimate optical flow, deblur the images, and segment the scene fail in such cases. In particular, boundaries between differently moving objects cause problems, because here the blurred images are a combination of the blurred appearances of multiple surfaces. We address this with a novel layered model of scenes in motion. From a motion-blurred video sequence, we jointly estimate the layer segmentation and each layer’s appearance and motion. Since the blur is a function of the layer motion and segmentation, it is completely determined by our generative model. Given a video, we formulate the optimization problem as minimizing the pixel error between the blurred frames and images synthesized from the model, and solve it using gradient descent. We demonstrate our approach on synthetic and real sequences.

Video

Members

Publications