Pose and Motion Priors

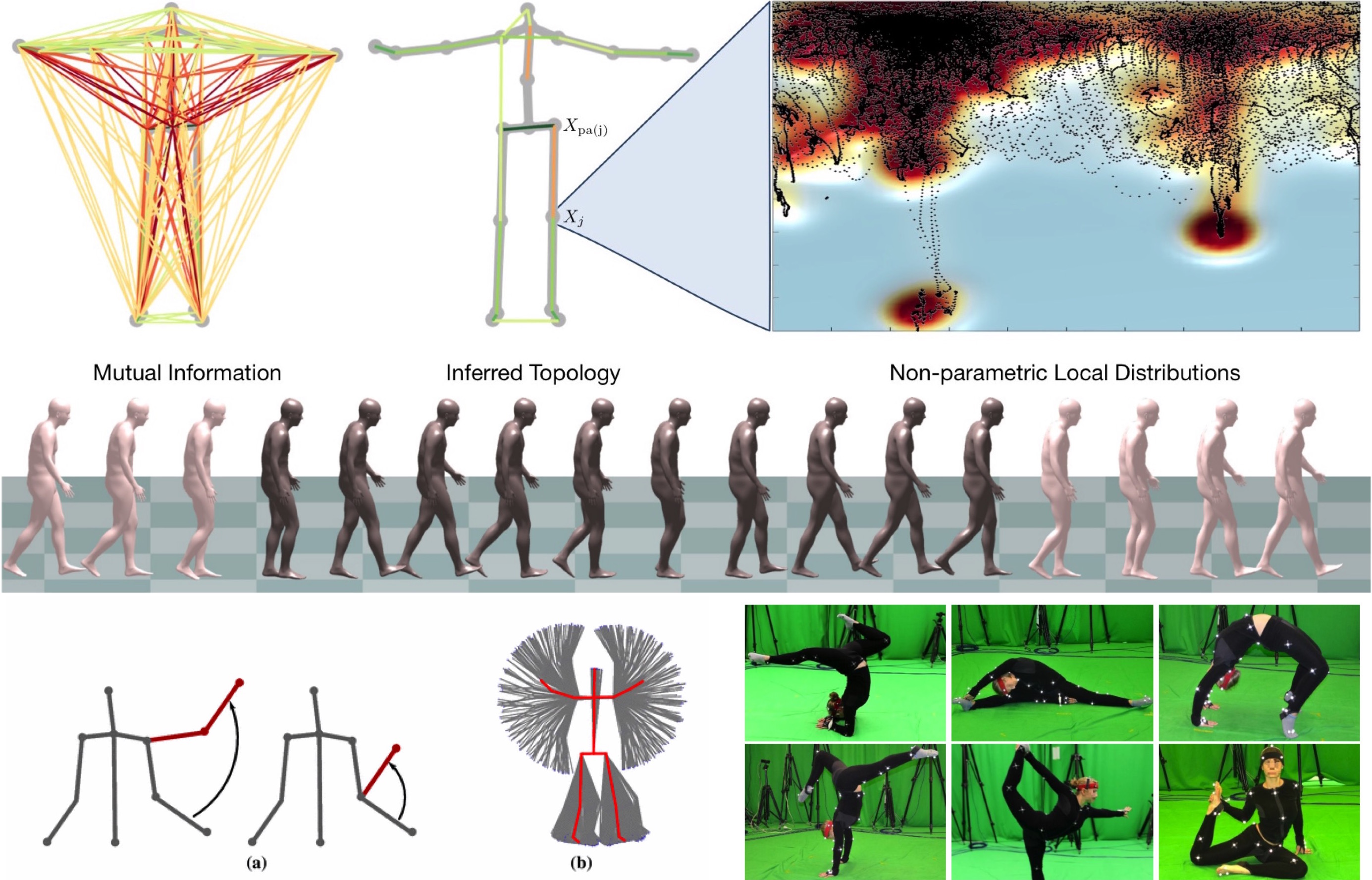

A prior over human pose is important for many human tracking and pose estimation problems.

We introduce a sparse Bayesian network model of human pose in which both the graph structure and the local distributions are estimated non-parametrically []. An efficient sampling scheme makes the computation of exact log-likelihoods tractable. Because the model is compositional, it can represent poses that are not present in the training set, and despite being non-parametric it remains fast enough for real-time inference.
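As a reminder of what a Bayesian network factorization implies for the log-likelihood, the generic form is sketched below. The notation is standard textbook notation and an assumption here, not taken from the cited work: $x_i$ denotes the $i$-th pose variable and $\mathrm{pa}(i)$ the set of its parents in the learned graph.

$$
\log p(x) = \sum_{i} \log p\left(x_i \mid x_{\mathrm{pa}(i)}\right)
$$

In a sparse graph each term depends only on a variable and its few parents. One way to read the compositionality claim is that a pose can receive high likelihood when each of its local configurations is well supported by the training data, even if the full pose never occurred.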

Action recognition and pose estimation are closely related topics; information from one task can be leveraged to assist the other, yet the two are often treated separately. In [] we develop a framework for coupled action recognition and pose estimation by formulating pose estimation as an optimization over a set of action-specific manifolds. The framework allows for integration of a 2D appearance-based action recognition system as a prior for 3D pose estimation and for refinement of the action labels using relational pose features based on the extracted 3D poses.

Modeling distributions over human poses requires a distance measure between poses; this is often taken to be the Euclidean distance between joint angle vectors. In [] we present an algorithm for computing geodesics in the Riemannian space of joint positions, as well as a fast approximation that allows for large-scale analysis. Articulated tracking systems can be improved by replacing the standard joint-angle distance with the geodesic distance in the space of joint positions. The geodesic distance significantly outperforms the joint-angle distance in classification, clustering and dimensionality reduction tasks.
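To illustrate why the choice of distance matters, the following minimal sketch compares the two kinds of measure on a toy planar kinematic chain. It is not the geodesic algorithm from the cited work: the chain, the function names and the example poses are all assumptions made for illustration. The last function only measures the length, in joint position space, of the path obtained by interpolating linearly in joint angles. That path consists of valid poses, so its length is an upper bound on the geodesic distance rather than the geodesic distance itself.

```python
import numpy as np


def joint_positions(angles, link_lengths):
    """Forward kinematics of a planar chain (hypothetical toy model).

    angles are relative joint angles in radians; returns an (n, 2) array
    holding the 2D position of the end of each link.
    """
    absolute = np.cumsum(angles)  # absolute orientation of each link
    steps = np.stack([link_lengths * np.cos(absolute),
                      link_lengths * np.sin(absolute)], axis=1)
    return np.cumsum(steps, axis=0)


def angle_distance(a, b):
    """Euclidean distance between joint angle vectors."""
    return float(np.linalg.norm(a - b))


def position_distance(a, b, link_lengths):
    """Euclidean (straight-line) distance between stacked joint positions."""
    diff = joint_positions(a, link_lengths) - joint_positions(b, link_lengths)
    return float(np.linalg.norm(diff))


def interpolated_path_length(a, b, link_lengths, steps=200):
    """Length in joint position space of the angle-interpolated path.

    This is an upper bound on the geodesic distance, not the geodesic.
    """
    ts = np.linspace(0.0, 1.0, steps + 1)
    points = np.stack([joint_positions((1 - t) * a + t * b, link_lengths).ravel()
                       for t in ts])
    return float(np.sum(np.linalg.norm(np.diff(points, axis=0), axis=1)))


# Toy example: a three-link arm with unit-length links.
links = np.ones(3)
rest = np.zeros(3)
shoulder_bent = np.array([0.5, 0.0, 0.0])  # rotates the whole arm
wrist_bent = np.array([0.0, 0.0, 0.5])     # moves only the last link

for name, pose in [("shoulder", shoulder_bent), ("wrist", wrist_bent)]:
    print(name,
          angle_distance(rest, pose),
          position_distance(rest, pose, links),
          interpolated_path_length(rest, pose, links))
```

Both example poses are equally far from the rest pose in joint angle space, yet bending the shoulder displaces every joint while bending the wrist displaces only the last one. A distance defined on joint positions reflects that difference, which is the intuition behind measuring distances there.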

To better model human pose, we collected a new, publicly available motion capture dataset of extreme poses [].
