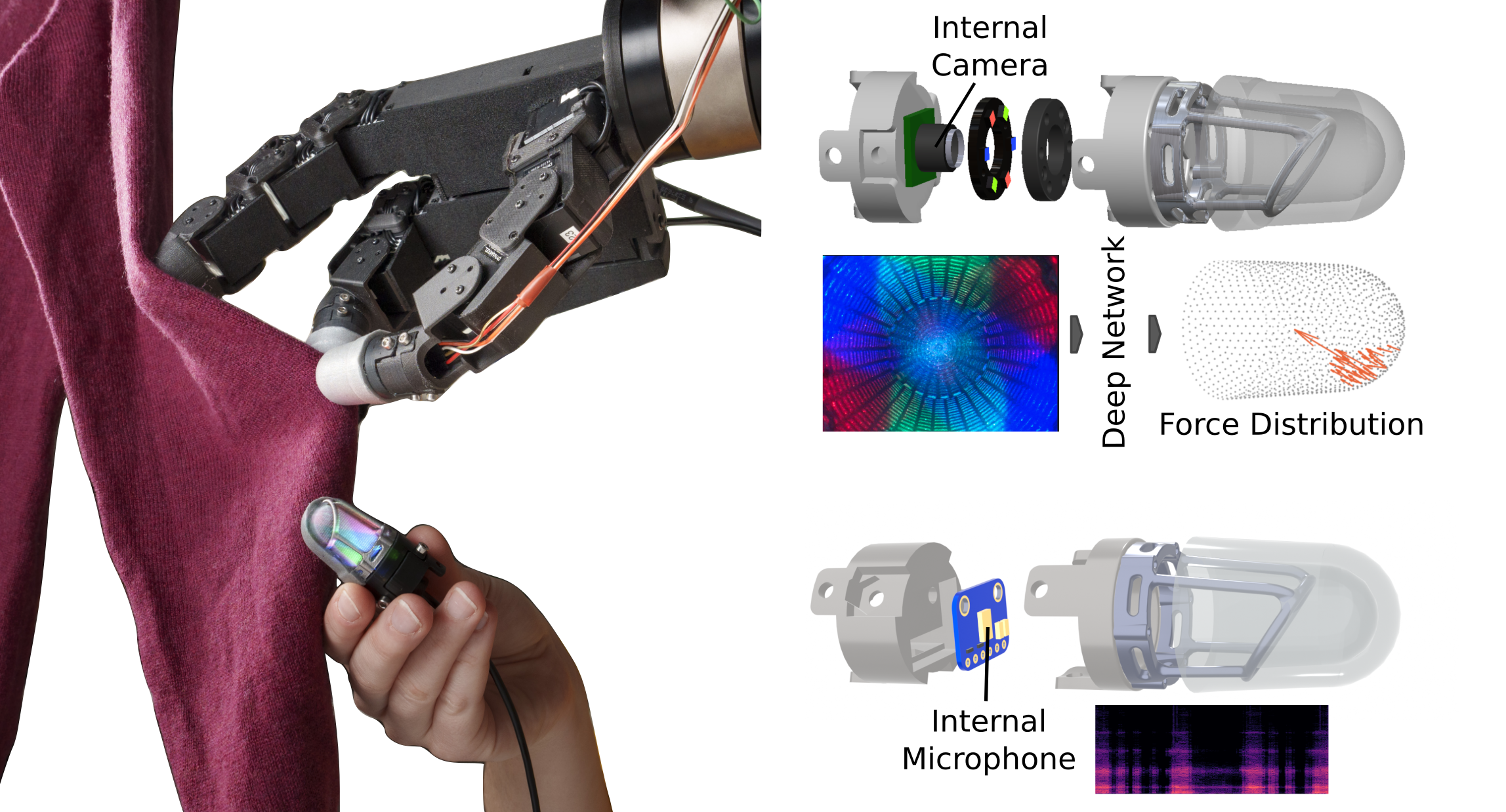

Giving Touch to Soft Robot Fingertips Using Vision, Audio and Machine Learning

Autonomous robots have the potential to become dexterous and work flexibly together with humans. To achieve this goal, their hardware needs to become more robust and provide richer sensory feedback, while their learning algorithms need to become more data-efficient and safety-aware. A clear shortcoming of current commodity robotic hardware is that it provides either no tactile sensing at all or only low-quality tactile sensing. In contrast, humans have a rich sense of touch and use it constantly, mostly subconsciously. In fact, if human haptic perception is impaired, dexterous manipulation becomes very challenging or even impossible. High-resolution haptic sensing similar to that of the human fingertip can enable robots to execute delicate manipulation tasks such as picking up small objects, inserting a key into a lock, or handing a full cup of coffee to a human.

This project aims to extend the capabilities of robotic manipulation by creating learning-based tactile sensors that capture the rich components of touch information. To this end, we explore vision-based and audio-based technologies processed with machine learning to create fast and robust touch sensing. As part of this project, we present Minsight, a fingertip-sized vision-based tactile sensor based on the Insight technology, capable of sensing forces on its omnidirectional sensing surface down to 0.05 N with an update rate of 60 Hz. This approach uses a camera to monitor changes in internal light intensity and/or color caused by deformations of the sensor's surrounding material due to external contact forces. The centrally positioned sensing component, a camera, does not bear the contact load, which ensures the high durability of the sensor.
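The sensing principle described above (a camera observing how internal light changes when the surrounding material deforms) can be illustrated with a minimal sketch. This is not the processing used by Minsight, which relies on machine learning to interpret the images; it is a plain frame-differencing toy on synthetic data. The function name `contact_map`, the threshold value, and the image size are all hypothetical choices made for illustration.

```python
import numpy as np

def contact_map(frame, reference, threshold=0.1):
    """Return a boolean mask of pixels whose intensity changed noticeably.

    frame, reference: float arrays of shape (H, W, 3), scaled to [0, 1].
    threshold: hypothetical cutoff on the mean absolute change per pixel.
    """
    diff = np.abs(frame.astype(np.float64) - reference.astype(np.float64))
    change = diff.mean(axis=-1)  # average the change over color channels
    return change > threshold

# Synthetic data (an assumption, not real sensor images):
# a uniform no-contact reference image and a frame with one brightened patch.
reference = np.full((32, 32, 3), 0.5)
frame = reference.copy()
frame[10:14, 20:24, :] += 0.3  # simulated deformation alters the light here

mask = contact_map(frame, reference)
rows, cols = np.nonzero(mask)
print("changed pixels:", int(mask.sum()))
print("patch center (row, col):", rows.mean(), cols.mean())
```

A learned model would replace the fixed threshold with a mapping from image changes to contact location and force, but the input signal is the same kind of difference between the current image and a no-contact reference.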

We investigate how Minsight's high-resolution tactile information can be used by learning-based processing methods to create robust manipulation strategies. We are also extending high-resolution vision-based sensing with an additional modality that captures the high-frequency temporal aspects of touch, which are useful for feeling surface textures or fabrics. To do so, we created a microphone-based twin sensor to Minsight, which captures audio with a bandwidth of 50 Hz to 15 kHz, and we use both sensors for dynamic exploration of fabrics with a robot hand.

This research project involves collaborations with Prof. Georg Martius (University of Tübingen) and Laura Schiller (University of Tübingen).
