Causal Reasoning in RL

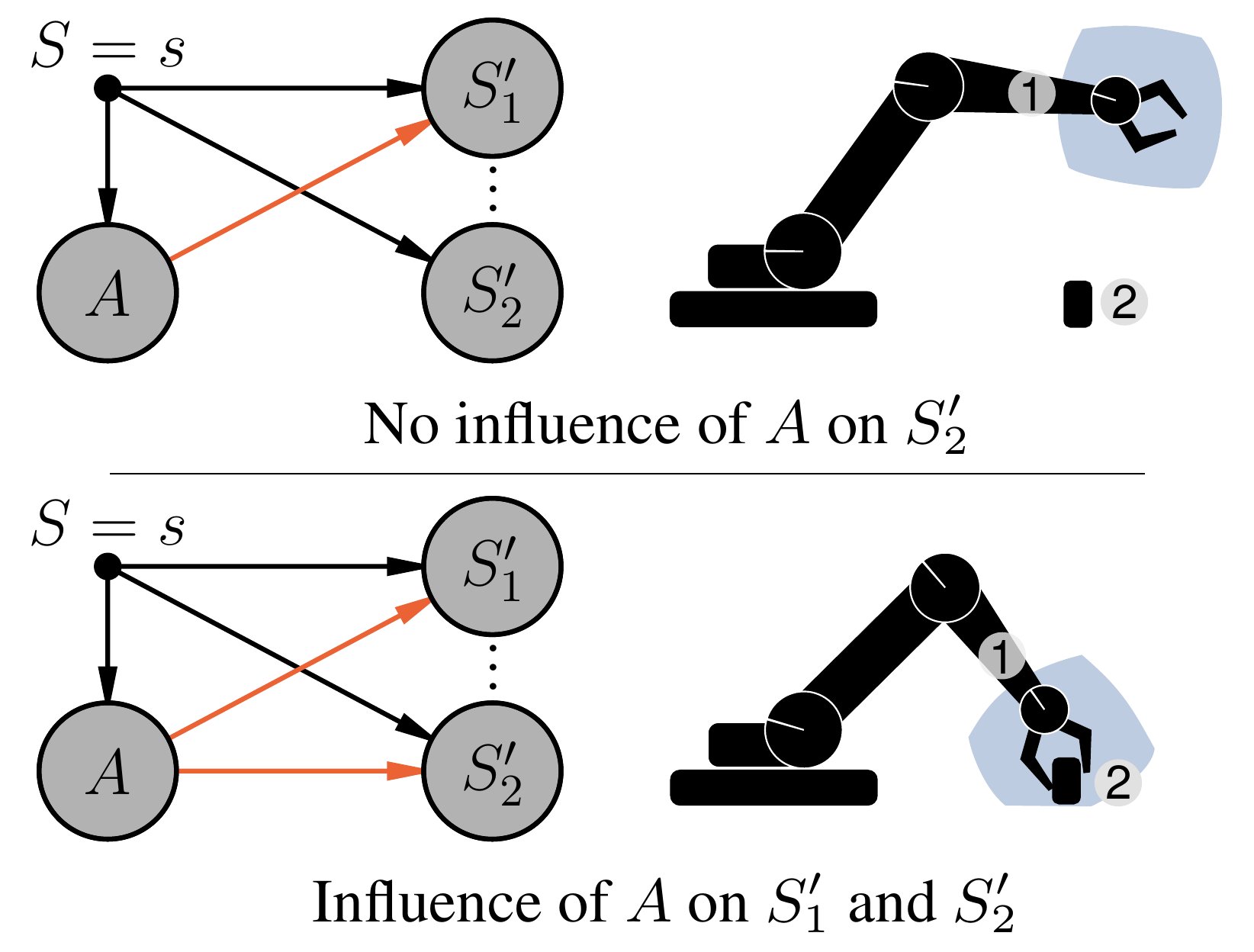

Reinforcement learning is fundamentally a causal endeavor. The agent intervenes in the environment through its actions and observes their effects; learning through credit assignment involves the counterfactual question of whether another action would have resulted in a better outcome. Causal Reasoning for RL is about learning and using causal knowledge to improve RL algorithms. We are interested in using tools from causality to make RL algorithms more robust and sample-efficient.

In a first project, we showed how learning a causal model of agent-object interactions allows us to infer whether the agent has causal influence on its environment. We then demonstrated how this information can be integrated into RL algorithms to steer the exploration process and improve the sample efficiency of off-policy training. Experiments on robotic manipulation benchmarks show a clear improvement over the state of the art.
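As an illustration of what "inferring causal influence" could look like, the sketch below scores a state by how much the predicted next-state distribution depends on the chosen action. It is not taken from the project: the choice of measure (conditional mutual information between action and next state under uniformly sampled actions), the tabular transition model, and the names `action_influence` and `exploration_bonus` are all assumptions made for this example.

```python
import math


def action_influence(transition_probs):
    """Estimate I(S'; A | S=s) for one state, assuming uniform actions.

    transition_probs[a][k] is a (hypothetical) learned model's probability
    of next-state outcome k when action a is taken in the current state.
    """
    n_actions = len(transition_probs)
    n_outcomes = len(transition_probs[0])
    # Next-state distribution when actions are drawn uniformly at random.
    marginal = [
        sum(row[k] for row in transition_probs) / n_actions
        for k in range(n_outcomes)
    ]
    # Average KL divergence between each action's prediction and the marginal.
    total = 0.0
    for row in transition_probs:
        for p, m in zip(row, marginal):
            if p > 0.0:  # m > 0 whenever p > 0, so the log is well defined
                total += p * math.log(p / m)
    return total / n_actions


def exploration_bonus(transition_probs, scale=1.0):
    """Hypothetical intrinsic reward: larger where actions matter more."""
    return scale * action_influence(transition_probs)


# Far from the object: every action leaves the object where it is.
no_contact = [[1.0, 0.0], [1.0, 0.0]]
# In contact: action 0 leaves the object in place, action 1 moves it.
in_contact = [[1.0, 0.0], [0.0, 1.0]]

influence_far = action_influence(no_contact)
influence_near = action_influence(in_contact)
```

In this toy setup the score is zero when the object's next state is the same for every action and positive when different actions lead to different outcomes. A score of this kind could serve as an exploration bonus or as a weight when sampling transitions from a replay buffer, which is one plausible reading of "steering exploration" and "improving off-policy training" above.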
