Intuitive Social-Physical Robots for Exercise

Physical activity plays a critical role in maintaining one's health. Past research has shown that well-designed robots can motivate users to perform regular exercise and physical therapy.

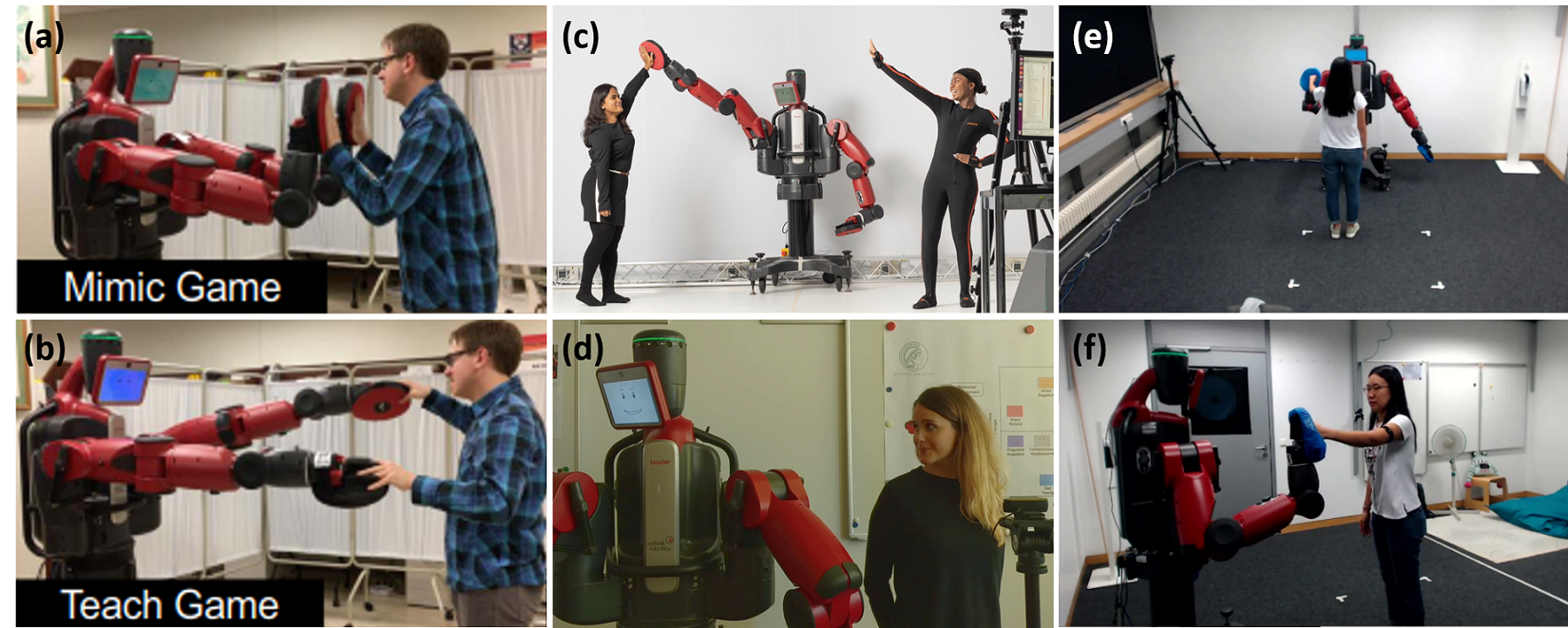

To understand how people respond to exercise-based interactions with a robot, we developed eight exercise games for the Rethink Robotics Baxter Research Robot. These games were developed with the input and guidance of experts in game design, therapy, and rehabilitation, and via extensive pilot testing. Results from our game evaluation study with 20 younger and 20 older adults support the potential use of bimanual humanoid robots for social-physical exercise interactions. However, these robots must have customizable behaviors and end-user monitoring capabilities to be viable in real-world scenarios.

Novel robot behaviors can be created using teleoperation. We are creating an interface that allows a therapist operator to control Baxter in a physically and emotionally expressive manner. Baxter's arm and head movements are driven by measurements from an Xsens inertial motion-capture suit worn by the operator. Ongoing work centers on real-time, optimization-based kinematic retargeting. The robot's head and face can simultaneously be teleoperated via emotion recognition on a video of the operator.
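As a rough illustration of what optimization-based kinematic retargeting can look like, the sketch below maps an operator's hand position onto a planar two-link arm by minimizing the position error plus a small penalty on deviating from the previous joint configuration. This is a toy example under stated assumptions, not the formulation used in this project: the two-link planar arm, the cost function, the damped Gauss-Newton solver, and all function names (`forward_kinematics`, `jacobian`, `retarget`) are hypothetical choices made for illustration.

```python
import math

def forward_kinematics(q, lengths):
    """End-point (x, y) of a planar two-link arm with joint angles q."""
    t1, t2 = q[0], q[0] + q[1]
    l1, l2 = lengths
    return (l1 * math.cos(t1) + l2 * math.cos(t2),
            l1 * math.sin(t1) + l2 * math.sin(t2))

def jacobian(q, lengths):
    """2x2 Jacobian of the end-point position with respect to q."""
    t2 = q[0] + q[1]
    l2 = lengths[1]
    x, y = forward_kinematics(q, lengths)
    return ((-y, -l2 * math.sin(t2)),
            (x, l2 * math.cos(t2)))

def retarget(target, q_prev, lengths, weight=1e-3, iters=100, step=0.5):
    """Approximately minimize
        0.5 * ||fk(q) - target||^2 + 0.5 * weight * ||q - q_prev||^2
    with damped Gauss-Newton steps, starting from q_prev.
    The weight term (an assumed regularizer) keeps motion smooth."""
    q = list(q_prev)
    for _ in range(iters):
        x, y = forward_kinematics(q, lengths)
        ex, ey = x - target[0], y - target[1]
        (a, b), (c, d) = jacobian(q, lengths)
        # Gradient: J^T e + weight * (q - q_prev)
        g0 = a * ex + c * ey + weight * (q[0] - q_prev[0])
        g1 = b * ex + d * ey + weight * (q[1] - q_prev[1])
        # Gauss-Newton Hessian approximation: J^T J + weight * I
        h00 = a * a + c * c + weight
        h01 = a * b + c * d
        h11 = b * b + d * d + weight
        det = h00 * h11 - h01 * h01  # positive because weight > 0
        dq0 = -(h11 * g0 - h01 * g1) / det
        dq1 = -(-h01 * g0 + h00 * g1) / det
        q[0] += step * dq0
        q[1] += step * dq1
    return tuple(q)

# Usage: track a reachable hand position from a nearby previous pose.
lengths = (1.0, 1.0)
target = forward_kinematics((0.5, 0.8), lengths)
q_new = retarget(target, q_prev=(0.3, 0.6), lengths=lengths)
```

In a real-time setting, a solver like this would be called once per motion-capture frame, warm-started from the previous solution. A real humanoid arm would additionally need joint limits, orientation targets, and a full 3D kinematic model.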

The end-users of our robotic exercise coach will be monitored via the Robot Interaction Studio, a platform for enabling minimally supervised human-robot interaction. This system combines Captury Live, a real-time markerless motion-capture system, with a ROS-compatible robot to estimate user actions in real time and provide corrective feedback. We evaluated this platform via a user study in which Baxter sequentially presented the user with three gesture-based cues in randomized order. We found that users who were given no instructions tended to explore the interaction workspace, mimic Baxter, and interact with Baxter's hands.

Our next step is to enable experts in exercise therapy to prototype a wide range of social-physical interactions for Baxter using our teleoperation interface. These teleoperated behaviors can be recorded and then autonomously learned and repeated. We envision scenarios in a community rehabilitation center where a robot in the Robot Interaction Studio acts as an exercise partner or coach for an end-user.
