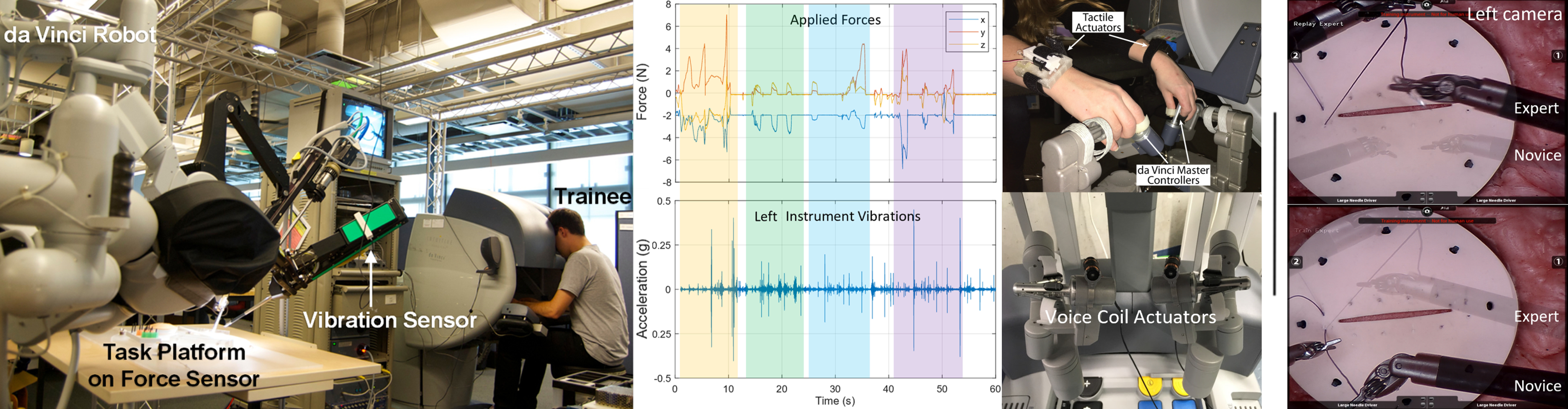

Minimally Invasive Surgical Training with Multimodal Feedback and Automatic Skill Evaluation

Minimally invasive surgery (MIS) allows surgeons to perform procedures through tiny incisions, thus reducing healing time compared to traditional open surgery. In robot-assisted MIS (RMIS), surgeons control the instruments from a console. Consequently, despite the advantages of a robotic platform, RMIS has one main drawback: the lack of haptic feedback, which is critical in manipulation tasks.

We explored the effects of haptic feedback while training in RMIS. First, we investigated vibrotactile feedback using VerroTouch, a previously developed system that allows the surgeon to feel the instruments’ vibrations at the operator’s manipulators. The vibrotactile feedback neither increased nor decreased the trainee's workload; ongoing work is examining its impact on learning. We also explored force feedback and created bracelets that squeeze the operator’s wrists in proportion to the force applied by the surgical instruments. Participants applied significantly less force to the task materials when receiving this feedback.
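The proportional squeeze described above can be illustrated with a minimal sketch. The function name, the gain, and the saturation limit are all hypothetical and are not taken from the bracelet hardware; the sketch only shows what a proportional mapping from instrument force to a normalized squeeze command might look like.

```python
def squeeze_command(tool_force_n, gain=0.2, max_command=1.0):
    """Map an instrument force magnitude (newtons) to a squeeze command in [0, max_command].

    Assumption: the command is linear in force and saturates at max_command.
    The gain and limit are illustrative values, not device parameters.
    """
    if tool_force_n < 0:
        raise ValueError("force magnitude must be non-negative")
    # Proportional mapping, clipped so the command never exceeds the limit.
    return min(gain * tool_force_n, max_command)
```

With these illustrative values, a 2 N instrument force yields a command of 0.4, and any force above 5 N saturates at 1.0.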

We identified two additional challenges when training in RMIS: the quantitative evaluation of surgical skills and the limits of existing simulators.

Currently, surgical skill is assessed mainly through manual video evaluation, which is time-consuming and subject to bias. For MIS, we developed a motion-tracking system and a machine-learning algorithm to evaluate trainee performance during suturing tasks. The automatic ratings closely matched human expert ratings. For RMIS, we showed that surgical skill can be estimated from completion time, force applied to the task materials, and vibrations generated at the surgical instruments.
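As a rough illustration of the three RMIS signals named above, the following sketch summarizes one recorded trial. The function name and the specific statistics (mean and peak force, RMS vibration) are assumptions chosen for the example; the source does not state which statistics or which model were used to estimate skill.

```python
import math


def skill_features(timestamps_s, forces_n, vibrations):
    """Summarize one trial as a dictionary of simple features.

    Assumption: all three lists are sampled at the same instants.
    The statistics below are illustrative, not the published feature set.
    """
    n = len(timestamps_s)
    if n < 2 or len(forces_n) != n or len(vibrations) != n:
        raise ValueError("need at least two samples and equal-length signals")
    return {
        # Time from the first to the last recorded sample.
        "completion_time_s": timestamps_s[-1] - timestamps_s[0],
        # Average and largest force applied to the task materials.
        "mean_force_n": sum(forces_n) / n,
        "peak_force_n": max(forces_n),
        # Root-mean-square magnitude of the instrument vibrations.
        "rms_vibration": math.sqrt(sum(v * v for v in vibrations) / n),
    }
```

Features like these could then be passed to a regression or classification model trained against expert ratings; that step is omitted here.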

Finally, training with physical simulators requires experts to supervise the process. In RMIS, the robotic console makes it possible to evaluate surgical skills in real time and to train in virtual and augmented reality. However, current virtual simulators result in lower skill transfer to actual surgeries. We therefore hypothesize that augmented reality simulators could combine the advantages of physical and virtual approaches. After an early prototype, our final platform allows expert surgeons to record surgical procedures with multiple modalities and novice surgeons to replay and train with the multimodal recordings, including visual, auditory, and vibrotactile feedback.
