Super-resolution Sensing for Haptics

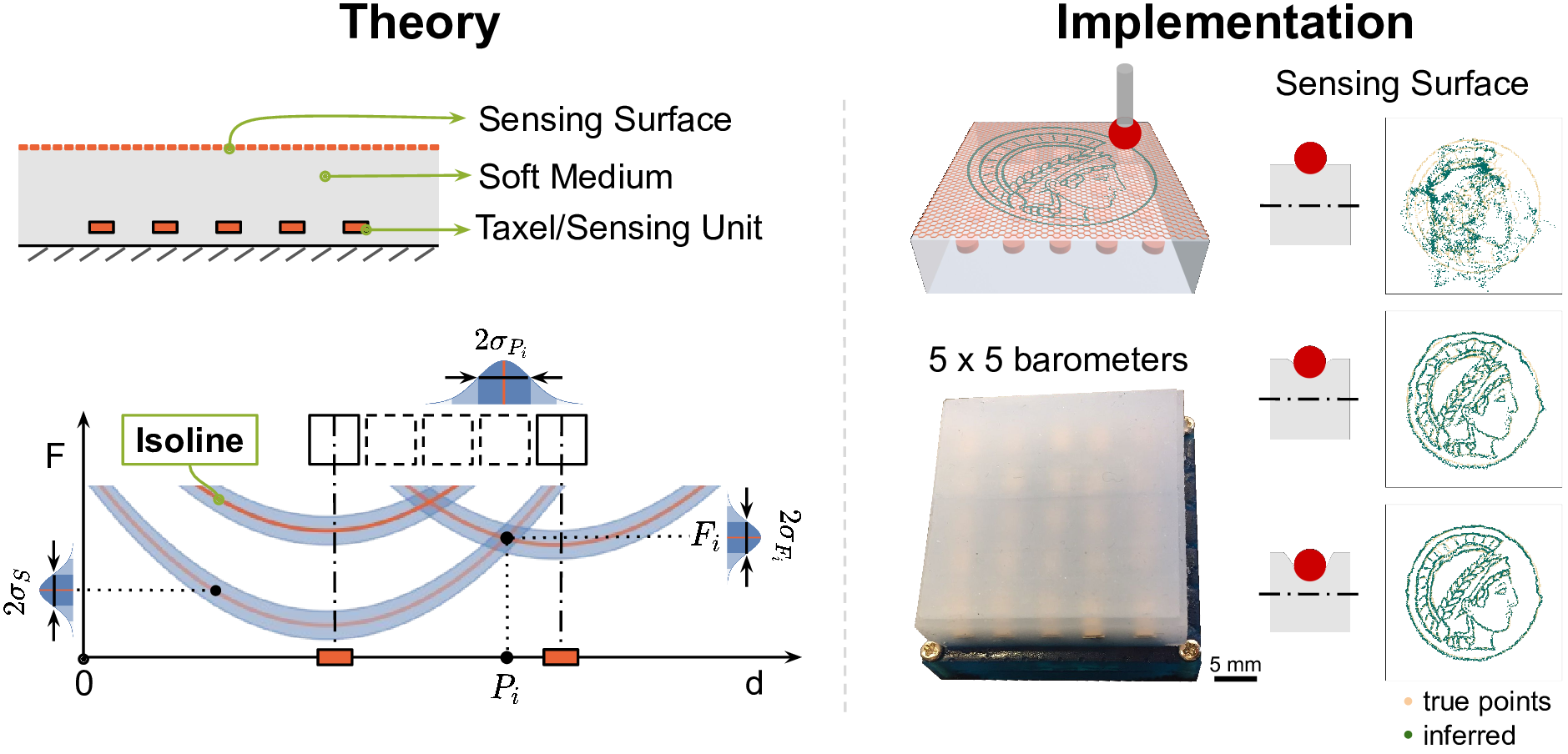

Haptic feedback is essential for making robots more dexterous and effective in unstructured environments. Nevertheless, high-resolution tactile sensors are still not widely available. Using a large number of physical sensing units often creates manufacturing challenges and wiring problems. Such complex hardware can also cause durability issues, because each additional component is a potential point of failure.

We pursue a route towards high-resolution and robust tactile skins by embedding only a few sensor units (taxels) into a flexible surface material and using signal processing to achieve sensing with super-resolution accuracy. We first rely on the empirical knowledge that overlapping the perception fields of multiple taxels enables super-resolution behavior, and we design a sensing system for robotic applications on that basis. We then propose a theory of geometric super-resolution to guide the development of tactile sensors of this kind and link it to machine learning techniques for signal processing. This theory is based on sensor isolines and allows us to predict, as spatial quantities over the sensing surface, the force sensitivity and the accuracy of the estimated contact position and force magnitude. We evaluate the influence of different factors, such as the elastic properties of the material, the structure design, and the transduction method, using finite element simulations and real sensor implementations. Using machine learning methods to infer contact information, our sensors obtain an unparalleled average super-resolution factor of over 1000. Our theory can guide future haptic sensor designs and inform various design choices.
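The idea that overlapping perception fields allow a contact to be localized far more finely than the taxel spacing can be illustrated with a toy one-dimensional example. The sketch below is not the sensor model or the learning method used in this project: the taxel positions, the Gaussian field shape, the field width, and the grid-search estimator are all assumptions chosen only for illustration, and the responses are noise-free.

```python
import math

# Hypothetical layout: four taxels spaced 10 mm apart on a 1D skin.
TAXEL_POSITIONS = [0.0, 10.0, 20.0, 30.0]  # mm (assumed)
FIELD_WIDTH = 8.0  # mm, assumed width of each Gaussian perception field


def taxel_responses(contact_pos, force=1.0):
    """Idealized, noise-free response of every taxel to a point contact."""
    return [
        force * math.exp(-0.5 * ((contact_pos - p) / FIELD_WIDTH) ** 2)
        for p in TAXEL_POSITIONS
    ]


def localize(measured, step=0.01):
    """Estimate contact position and force by grid search.

    For each candidate position, the best-fitting force is obtained in
    closed form (linear least squares), and the candidate with the
    smallest squared residual is returned.
    """
    span = TAXEL_POSITIONS[-1] - TAXEL_POSITIONS[0]
    n_steps = int(round(span / step))
    best = (float("inf"), None, None)
    for i in range(n_steps + 1):
        x = TAXEL_POSITIONS[0] + i * step
        model = taxel_responses(x, force=1.0)
        # Least-squares force scale for this candidate position.
        f = sum(r * m for r, m in zip(measured, model)) / sum(m * m for m in model)
        residual = sum((r - f * m) ** 2 for r, m in zip(measured, model))
        if residual < best[0]:
            best = (residual, x, f)
    return best[1], best[2]


if __name__ == "__main__":
    pos, force = localize(taxel_responses(13.37, force=2.0))
    print(f"estimated position: {pos:.2f} mm, estimated force: {force:.2f}")
```

Because neighboring fields overlap, the ratio of adjacent taxel responses changes continuously with the contact position, so the contact can be placed between taxels rather than only at them. With real, noisy signals the achievable accuracy depends on the signal-to-noise ratio and on the material and structure of the sensor, which is what the theory described above aims to predict.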
